- Meta releases its AI models for video and images, Emu Video and Emu Edit.

- These latest tools can conjure stunning visuals from text, setting up a generative AI showdown.

On Thursday, Meta offered a sneak peek at its two newest AI tools, Emu Video and Emu Edit, giving the first real look at technology announced at Meta Connect in September.

Emu Video lets users create videos from plain text prompts, while Emu Edit takes a different approach to image editing: it changes images based on written instructions, including inpainting-style edits to specific parts of a picture.

Today we’re sharing two new advances in our generative AI research: Emu Video & Emu Edit.

Details ➡️ https://t.co/qm8aejgNtd

These new models deliver exciting results in high quality, diffusion-based text-to-video generation & controlled image editing w/ text instructions.

— AI at Meta (@AIatMeta) November 16, 2023

The introduction of Emu Video and Emu Edit is a strategic move for Meta, which it says still aligns with its broader vision for the Metaverse.

The company said these tools offer new creative capabilities designed to appeal to a wide range of users, from professional content creators to those simply looking for novel ways to express ideas.

Emu Video in particular demonstrates the company's commitment to advancing AI-driven content generation, and it could become a major competitor to popular names like Runway and Pika Labs, which have so far dominated the space.

Emu Video: Text-To-Video Creation

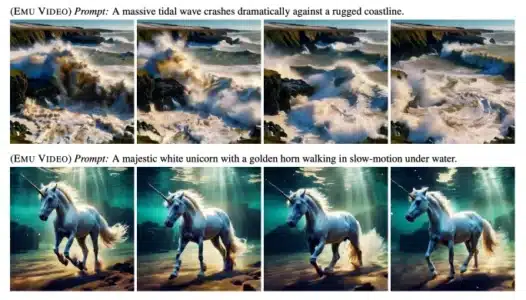

Emu Video uses a two-step process to create videos from text prompts. It first generates an image from the input text, then generates a video conditioned on both the text and that generated image.

This approach simplifies the video generation process, avoiding the more complex, multi-model methods used to power Meta’s previous Make-A-Video tool.
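The two-step factorization described above can be sketched as a simple pipeline. This is a minimal, hypothetical illustration, not Meta's code: the function names `text_to_image`, `image_and_text_to_video` and `generate_video`, the `Image` placeholder type, and the default frame count are all assumptions, and the "models" are stubs that only record what they were conditioned on. The point is the data flow: stage 2 receives both the prompt and the stage-1 image.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Image:
    """Hypothetical stand-in for a generated frame; a real model would hold pixels."""
    width: int
    height: int
    description: str


def text_to_image(prompt: str, size: int = 512) -> Image:
    # Hypothetical stage 1: a text-to-image model would run here.
    return Image(size, size, f"image for '{prompt}'")


def image_and_text_to_video(prompt: str, first: Image, num_frames: int) -> List[Image]:
    # Hypothetical stage 2: a video model conditioned on BOTH the prompt
    # and the stage-1 image. This stub just copies the image's resolution
    # into every frame and records what each frame was conditioned on.
    return [
        Image(first.width, first.height,
              f"frame {i} of '{prompt}', conditioned on {first.description}")
        for i in range(num_frames)
    ]


def generate_video(prompt: str, num_frames: int = 16, size: int = 512) -> List[Image]:
    """Factorized text-to-video: text -> image, then (text, image) -> video."""
    first = text_to_image(prompt, size)
    return image_and_text_to_video(prompt, first, num_frames)
```

For example, `generate_video("a corgi surfing", num_frames=4)` returns four 512×512 placeholder frames, each tied to the same first image. Splitting the problem this way means the video stage does not have to invent the scene's appearance from text alone.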

The videos Emu Video creates are limited to a resolution of 512×512 pixels, but they follow the text prompts closely. According to Meta, this faithfulness to the prompt is what sets Emu Video apart from most existing models and commercial solutions.

Although the models themselves are not publicly available, users can try Emu Video on a set of predefined prompts, and the results are smooth, with minimal inconsistencies between frames.

Emu Edit: Image Editing With Inpainting

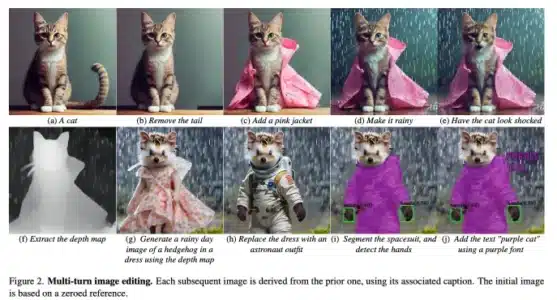

Alongside Emu Video, Meta also showcased Emu Edit, an AI tool that performs a range of image editing tasks from natural-language instructions. Emu Edit lets users edit images with a high degree of precision and flexibility.

“Emu Edit [is] a multi-task image editing model which sets state-of-the-art results in instruction-based image editing,” Meta’s research paper for the tool says, underscoring its ability to execute complex editing instructions accurately.

Emu Edit is built on diffusion models, the image generation technique popularized by Stable Diffusion. Meta says the model is designed so that edits change only what the instruction asks for, preserving the visual integrity of the rest of the original image.
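One common way inpainting-style edits keep the rest of an image intact is mask-based compositing: newly generated pixels are used only inside an edit region, and the original pixels are kept everywhere else. The sketch below is a generic illustration of that idea, not a description of Emu Edit's internals (Emu Edit is driven by text instructions); the `composite` function, the grayscale list-of-lists image format and the mask are hypothetical.

```python
from typing import List

# Hypothetical image format: a grayscale image as rows of floats in [0, 1].
Grid = List[List[float]]


def composite(original: Grid, generated: Grid, mask: Grid) -> Grid:
    """Blend per pixel: out = mask * generated + (1 - mask) * original.

    A mask value of 1 takes the generated pixel; 0 keeps the original
    pixel untouched; values in between blend the two.
    """
    if not (len(original) == len(generated) == len(mask)):
        raise ValueError("images and mask must have the same height")
    out: Grid = []
    for row_o, row_g, row_m in zip(original, generated, mask):
        if not (len(row_o) == len(row_g) == len(row_m)):
            raise ValueError("images and mask must have the same width")
        out.append([m * g + (1.0 - m) * o for o, g, m in zip(row_o, row_g, row_m)])
    return out


original = [[0.2, 0.2], [0.2, 0.2]]
generated = [[0.9, 0.9], [0.9, 0.9]]
mask = [[0, 1], [0, 0]]  # edit only the top-right pixel
print(composite(original, generated, mask))  # [[0.2, 0.9], [0.2, 0.2]]
```

Only the masked pixel changes; the other three are copied from the original unchanged.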

Meta's work on AI tools like Emu Video and Emu Edit fits its strategy of building technologies it considers crucial to the Metaverse. That strategy also includes Meta AI, a personal assistant powered by the Llama 2 large language model, and bringing multimodal AI to AR devices.
